Daily Shaarli

June 20, 2024

Here lies the internet, murdered by generative AI

Corruption everywhere, even in YouTube's kids content

Erik Hoel Feb 27, 2024

Art for The Intrinsic Perspective is by Alexander Naughton

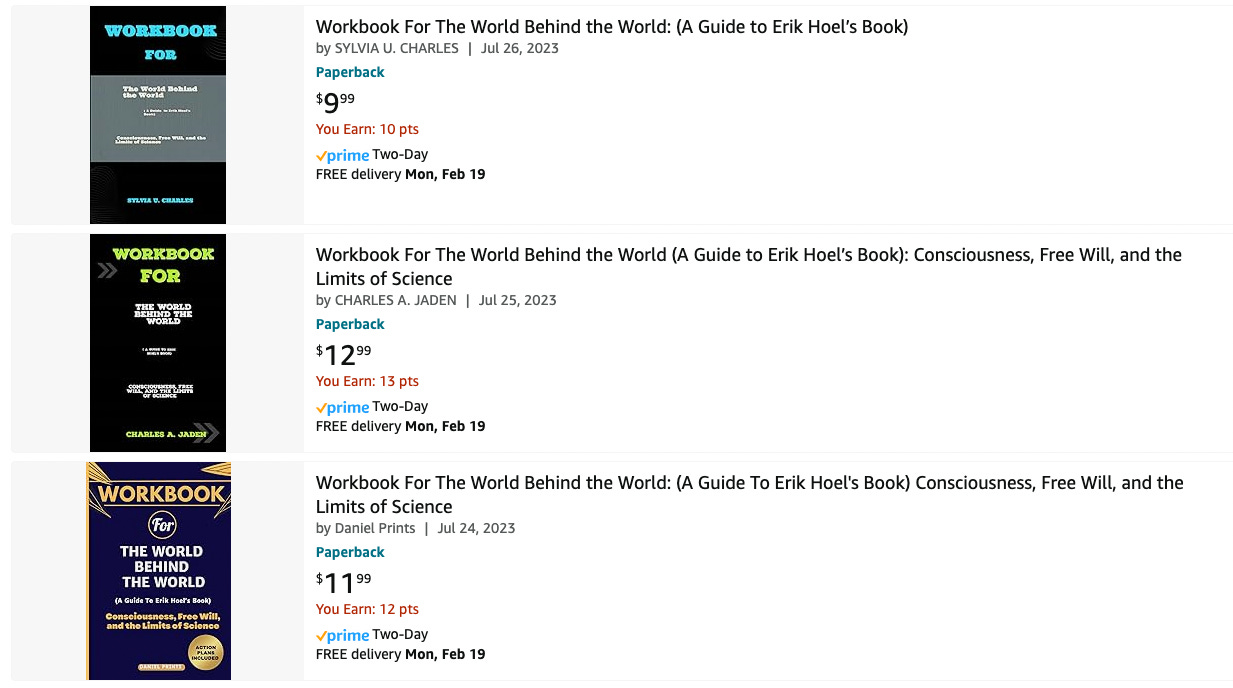

The amount of AI-generated content is beginning to overwhelm the internet. Or maybe a better term is pollute. Pollute its searches, its pages, its feeds, everywhere you look. I’ve been predicting that generative AI would have pernicious effects on our culture since 2019, but now everyone can feel it. Back then I called it the coming “semantic apocalypse.” Well, the semantic apocalypse is here, and you’re being affected by it, even if you don’t know it. A minor personal example: last year I published a nonfiction book, The World Behind the World, and now on Amazon I find this.

What, exactly, are these “workbooks” for my book? AI pollution. Synthetic trash heaps floating in the online ocean. The authors aren’t real people, some asshole just fed the manuscript into an AI and didn’t check when it spit out nonsensical summaries. But it doesn’t matter, does it? A poor sod will click on the $9.99 purchase one day, and that’s all that’s needed for this scam to be profitable since the process is now entirely automatable and costs only a few cents. Pretty much all published authors are affected by similar scams, or will be soon.

Now that generative AI has dropped the cost of producing bullshit to near zero, we see clearly the future of the internet: a garbage dump. Google search? They often lead with fake AI-generated images amid the real things. Post on Twitter? Get replies from bots selling porn. But that’s just the obvious stuff. Look closely at the replies to any trending tweet and you’ll find dozens of AI-written summaries in response, cheery Wikipedia-style repeats of the original post, all just to farm engagement. AI models on Instagram accumulate hundreds of thousands of subscribers and people openly shill their services for creating them. AI musicians fill up YouTube and Spotify. Scientific papers are being AI-generated. AI images mix into historical research. This isn’t mentioning the personal impact too: from now on, every single woman who is a public figure will have to deal with the fact that deepfake porn of her is likely to be made. That’s insane.

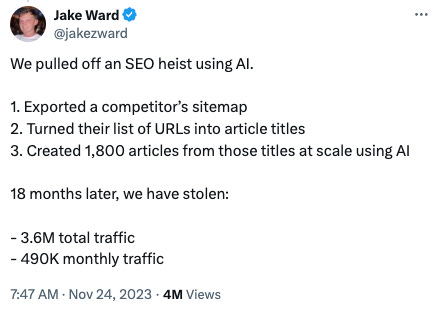

And rather than this being pure skullduggery, people and institutions are willing to embrace low-quality AI-generated content, trying to shift the Overton window to make things like this acceptable:

That’s not hardball capitalism. That’s polluting our culture for your own minor profit. It’s not morally legitimate for the exact same reasons that polluting a river for a competitive edge is not legitimate. Yet name-brand media outlets are embracing generative AI just like SEO-spammers are, for the same reasons.

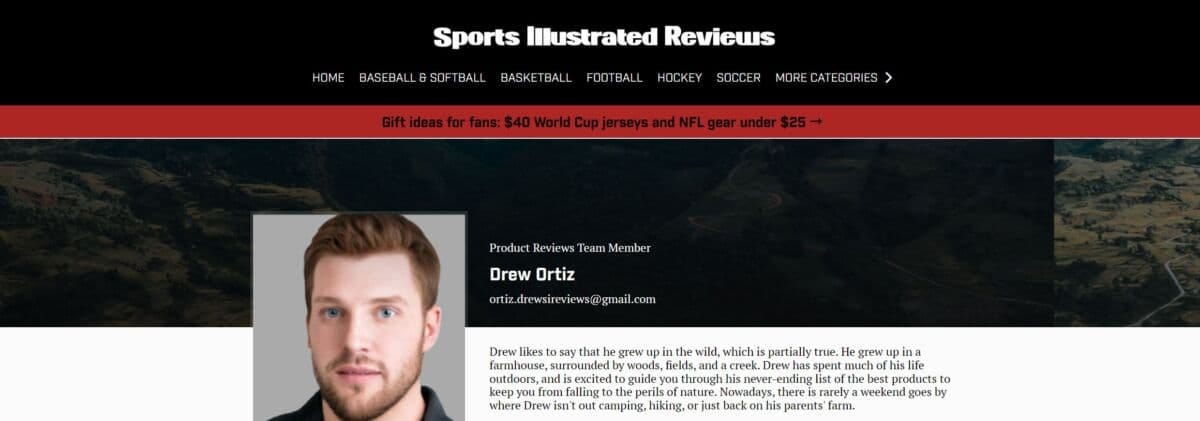

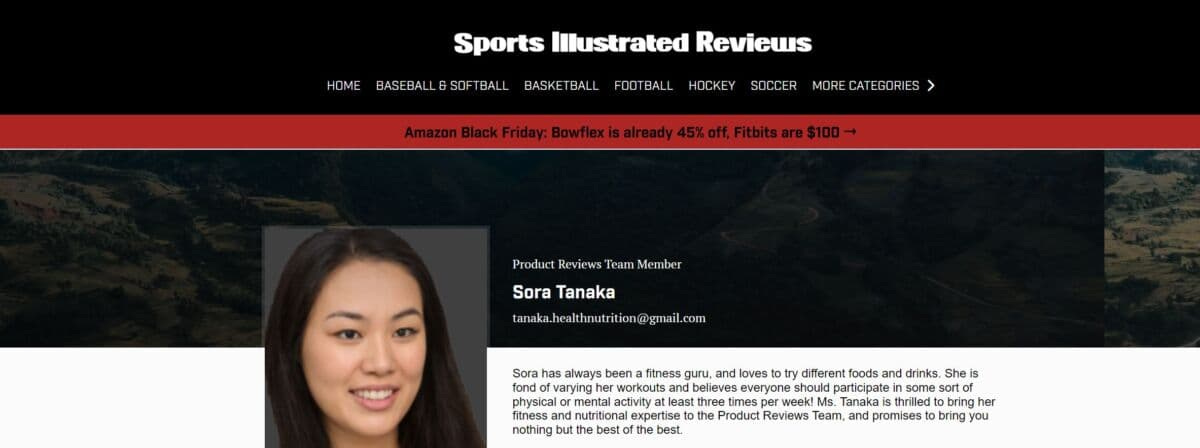

E.g., investigative work at Futurism caught Sports Illustrated red-handed using AI-generated articles written by fake writers. Meet Drew Ortiz.

He doesn’t exist. That face is an AI-generated portrait, which was previously listed for sale on a website. As Futurism describes:

Ortiz isn't the only AI-generated author published by Sports Illustrated, according to a person involved with the creation of the content…

"At the bottom [of the page] there would be a photo of a person and some fake description of them like, 'oh, John lives in Houston, Texas. He loves yard games and hanging out with his dog, Sam.' Stuff like that," they continued. "It's just crazy."

This isn’t what everyone feared, which is AI replacing humans by being better; it’s replacing them because AI is so much cheaper. Sports Illustrated was not producing human-quality content with these methods, but it was still profitable.

The AI authors' writing often sounds like it was written by an alien; one Ortiz article, for instance, warns that volleyball "can be a little tricky to get into, especially without an actual ball to practice with."

Sports Illustrated, in a classy move, deleted all the evidence. Drew was replaced by Sora Tanaka, bearing a face also listed for sale on the same website with the description of a “joyful asian young-adult female with long brown hair and brown eyes.”

Given that even prestigious outlets like The Guardian refuse to put any clear limits on their use of AI, if you notice odd turns of phrase or low-quality articles, the likelihood that they’re written by an AI, or with AI-assistance, is now high.

Sadly, the people affected the most by generative AI are the ones who can’t defend themselves. Because they don’t even know what AI is. Yet we’ve abandoned them to swim in polluted information currents. I’m talking, unfortunately, about toddlers. Because let me introduce you to…

the hell that is AI-generated children’s YouTube content.

YouTube for kids is quickly becoming a stream of synthetic content. Much of it now consists of wooden digital characters interacting in short nonsensical clips without continuity or purpose. Toddlers are forced to sit and watch this runoff because no one is paying attention. And the toddlers themselves can’t discern that characters come and go and that the plots don’t make sense and that it’s all just incoherent dream-slop. The titles don’t match the actual content, and titles are all that parents likely check, because they grew up in a culture where if a YouTube video said BABY LEARNING VIDEOS and had a million views it was likely okay. Now, some of the nonsense AI-generated videos aimed at toddlers have tens of millions of views.

Here’s a behind-the-scenes video on a single channel that made 1.2 million dollars via AI-generated “educational content” aimed at toddlers.

As the video says:

These kids, when they watch these kind of videos, they watch them over and over and over again.

They aren’t confessing. They’re bragging. And the particular channel they focus on isn’t even the worst offender—at least that channel’s content mostly matches the subheadings and titles, even if the videos are jerky, strange, off-putting, repetitious, clearly inhuman. Other channels, which are also obviously AI-generated, get worse and worse. Here’s a “kid’s education” channel that is AI-generated (took about one minute to find) with 11.7 million subscribers.

They don’t use proper English, and after quickly going through some shapes like the initial video title promises (albeit doing it in a way that makes you feel like you’re going insane) the rest of the video devolves into randomly-generated rote tasks, eerie interactions, more incorrect grammar, and uncanny musical interludes of songs that serve no purpose but to pad the time. It is the creation of an alien mind.

Here’s an example of the next frontier: completely start-to-finish AI-generated music videos for toddlers. Below is a how-to video for these new techniques. The result? Nightmarish parrots with twisted double-beaks and four mutated eyes singing artificial howls from beyond. Click and behold (or don’t, if you want to sleep tonight).

All around the nation there are toddlers plunked down in front of iPads being subjected to synthetic runoff, deprived of human contact even in the media they consume. There’s no other word but dystopian. Might not actual human-generated cultural content normally contain cognitive micro-nutrients (like cohesive plots and sentences, detailed complexity, reasons for transitions, an overall gestalt, etc) that the human mind actually needs? We’re conducting this experiment live. For the first time in history developing brains are being fed choppy low-grade and cheaply-produced synthetic data created en masse by generative AI, instead of being fed with real human culture. No one knows the effects, and no one appears to care. Especially not the companies, because…

OpenAI has happily allowed pollution.

Why blame them, specifically? Well, first of all, their massive impact: most of the kids’ videos, for example, are built from scripts generated by ChatGPT. And more generally, what AI capabilities are considered okay to deploy has long been a standard set by OpenAI. Despite their supposed safety focus, OpenAI failed to foresee that its creations would thoroughly pollute the internet across all platforms and services. You can see this failure in how they assessed potential negative outcomes in the announcement of GPT-2 on their blog, back in 2019. While they did warn that these models could have serious long-term consequences for the information ecosystem, the specifics they were concerned with were things like:

Generate misleading news articles

Impersonate others online

Automate the production of abusive or faked content to post on social media

Automate the production of spam/phishing content

This may sound kind of in line with what’s happened, but if you read further, it becomes clear that what they meant by “faked content” was mainly malicious actors promoting misinformation, or the same shadowy malicious actors using AI to phish for passwords, etc.

These turned out to be only minor concerns compared to AI’s cultural pollution. OpenAI kept talking about “actors” when they should have been talking about “users.” Because it turns out, all AI-generated content is fake! Or it’s all kind of fake. AI-written websites, now sprouting up like an unstoppable invasive species, don’t necessarily have an intent to mislead; it’s just that AI content is low-effort banalities generated for pennies, so you can SEO spam and do all sorts of manipulative games around search to attract eyeballs and ad revenue.

That is, the OpenAI team didn’t stop to think that regular users just generating mounds of AI-generated content on the internet would have negative effects very similar to those of widespread malicious use by intentional bad actors. Because there’s no clear distinction! The fact that OpenAI was both honestly worried about negative effects, and at the same time didn’t predict the enshittification of the internet they spearheaded, should make us extremely worried they will continue to miss the negative downstream effects of their increasingly intelligent models. They failed to foresee the floating mounds of clickbait garbage, the synthetic info-trash cities, all to collect clicks and eyeballs, even from innocent children who don’t know any better. And they won’t do anything to stop it, because…

AI pollution is a tragedy of the commons.

This term, “tragedy of the commons,” originated in the rising environmentalism of the 20th century, and would lead to many of the regulations that keep our cities free of smog and our rivers clean. Garrett Hardin, an ecologist and biologist, coined it in an article in [Science](https://math.uchicago.edu/~shmuel/Modeling/Hardin,%20Tragedy%20of%20the%20Commons.pdf) in 1968. The article is still instructively relevant. Hardin wrote:

An implicit and almost universal assumption of discussions published in professional and semipopular scientific journals is that the problem under discussion has a technical solution…

He goes on to discuss several problems for which there are no technical solutions, since rational actors will drive the system toward destruction via competition:

The tragedy of the commons develops in this way. Picture a pasture open to all. It is to be expected that each herdsman will try to keep as many cattle as possible on the commons. Such an arrangement may work reasonably satisfactorily for centuries because tribal wars, poaching, and disease keep the numbers of both man and beast well below the carrying capacity of the land. Finally, however, comes the day of reckoning, that is, the day when the long-desired goal of social stability becomes a reality. At this point, the inherent logic of the commons remorselessly generates tragedy.

One central example of Hardin’s became instrumental to the environmental movement.

… the tragedy of the commons reappears in problems of pollution. Here it is not a question of taking something out of the commons, but of putting something in—sewage, or chemical, radioactive, and heat wastes into water; noxious and dangerous fumes into the air; and distracting and unpleasant advertising signs into the line of sight. The calculations of utility are much the same as before. The rational man finds that his share of the cost of the wastes he discharges into the commons is less than the cost of purifying his wastes before releasing them. Since this is true for everyone, we are locked into a system of "fouling our own nest," so long as we behave only as independent, rational, free-enterprisers.

We are currently fouling our own nests. Since the internet economy runs on eyeballs and clicks, the new ability of anyone, anywhere, to easily generate infinite low-quality content via AI is now remorselessly generating tragedy.

The solution, as Hardin noted, isn’t technical. You can’t detect AI outputs reliably anyway (another initial promise that OpenAI abandoned). The companies won’t self-regulate, given their massive financial incentives. We need the equivalent of a Clean Air Act: a Clean Internet Act. We can’t just sit by and let human culture end up buried.

Luckily we’re on the cusp of all that incredibly futuristic technology promised by AI. Any day now, our GDP will start to rocket forward. In fact, soon we’ll cure all disease, even aging itself, and have robot butlers and Universal Basic Income and high-definition personalized entertainment. Who cares if toddlers had to watch inhuman runoff for a few billion years of viewing-time to make the future happen? It was all worth it. Right? Let’s wait a little bit longer. If we wait just a little longer utopia will surely come.

Why the Internet Isn’t Fun Anymore

The social-media Web as we knew it, a place where we consumed the posts of our fellow-humans and posted in return, appears to be over.

By Kyle Chayka October 9, 2023

Lately on X, the platform formerly known as Twitter, my timeline is filled with vapid posts orbiting the same few topics like water whirlpooling down a drain. Last week, for instance, the chatter was dominated by talk of Taylor Swift’s romance with the football player Travis Kelce. If you tried to talk about anything else, the platform’s algorithmic feed seemed to sweep you into irrelevance. Users who pay for Elon Musk’s blue-check verification system now dominate the platform, often with far-right-wing commentary and outright disinformation; Musk rewards these users monetarily based on the engagement that their posts drive, regardless of their veracity. The decay of the system is apparent in the spread of fake news and mislabelled videos related to Hamas’s attack on Israel.

Elsewhere online, things are similarly bleak. Instagram’s feed pushes months-old posts and product ads instead of photos from friends. Google search is cluttered with junky results, and S.E.O. hackers have ruined the trick of adding “Reddit” to searches to find human-generated answers. Meanwhile, Facebook’s parent company, Meta, in its latest bid for relevance, is reportedly developing artificial-intelligence chatbots with various “sassy” personalities that will be added to its apps, including a role-playing D. & D. Dungeon Master based on Snoop Dogg. The prospect of interacting with such a character sounds about as appealing as texting with one of those spam bots that asks you if they have the right number.

The social-media Web as we knew it, a place where we consumed the posts of our fellow-humans and posted in return, appears to be over. The precipitous decline of X is the bellwether for a new era of the Internet that simply feels less fun than it used to be. Remember having fun online? It meant stumbling onto a Web site you’d never imagined existed, receiving a meme you hadn’t already seen regurgitated a dozen times, and maybe even playing a little video game in your browser. These experiences don’t seem as readily available now as they were a decade ago. In large part, this is because a handful of giant social networks have taken over the open space of the Internet, centralizing and homogenizing our experiences through their own opaque and shifting content-sorting systems. When those platforms decay, as Twitter has under Elon Musk, there is no other comparable platform in the ecosystem to replace them. A few alternative sites, including Bluesky and Discord, have sought to absorb disaffected Twitter users. But like sproutlings on the rain-forest floor, blocked by the canopy, online spaces that offer fresh experiences lack much room to grow.

One Twitter friend told me, of the platform’s current condition, “I’ve actually experienced quite a lot of grief over it.” It may seem strange to feel such wistfulness about a site that users habitually referred to as a “hellsite.” But I’ve heard the same from many others who once considered Twitter, for all its shortcomings, a vital social landscape. Some of them still tweet regularly, but their messages are less likely to surface in my Swift-heavy feed. Musk recently tweeted that the company’s algorithm “tries to optimize time spent on X” by, say, boosting reply chains and downplaying links that might send people away from the platform. The new paradigm benefits tech-industry “thread guys,” prompt posts in the “what’s your favorite Marvel movie” vein, and single-topic commentators like Derek Guy, who tweets endlessly about menswear. Algorithmic recommendations make already popular accounts and subjects even more so, shutting out the smaller, more magpie-ish voices that made the old version of Twitter such a lively destination. (Guy, meanwhile, has received so much algorithmic promotion under Musk that he accumulated more than half a million followers.)

The Internet today feels emptier, like an echoing hallway, even as it is filled with more content than ever. It also feels less casually informative. Twitter in its heyday was a source of real-time information, the first place to catch wind of developments that only later were reported in the press. Blog posts and TV news channels aggregated tweets to demonstrate prevailing cultural trends or debates. Today, they do the same with TikTok posts—see the many local-news reports of dangerous and possibly fake “TikTok trends”—but the TikTok feed actively dampens news and political content, in part because its parent company is beholden to the Chinese government’s censorship policies. Instead, the app pushes us to scroll through another dozen videos of cooking demonstrations or funny animals. In the guise of fostering social community and user-generated creativity, it impedes direct interaction and discovery.

According to Eleanor Stern, a TikTok video essayist with nearly a hundred thousand followers, part of the problem is that social media is more hierarchical than it used to be. “There’s this divide that wasn’t there before, between audiences and creators,” Stern said. The platforms that have the most traction with young users today—YouTube, TikTok, and Twitch—function like broadcast stations, with one creator posting a video for her millions of followers; what the followers have to say to one another doesn’t matter the way it did on the old Facebook or Twitter. Social media “used to be more of a place for conversation and reciprocity,” Stern said. Now conversation isn’t strictly necessary, only watching and listening.

Posting on social media might be a less casual act these days, as well, because we’ve seen the ramifications of blurring the border between physical and digital lives. Instagram ushered in the age of self-commodification online—it was the platform of the selfie—but TikTok and Twitch have turbocharged it. Selfies are no longer enough; video-based platforms showcase your body, your speech and mannerisms, and the room you’re in, perhaps even in real time. Everyone is forced to perform the role of an influencer. The barrier to entry is higher and the pressure to conform stronger. It’s no surprise, in this environment, that fewer people take the risk of posting and more settle into roles as passive consumers.

The patterns of life offscreen affect the makeup of the digital world, too. Having fun online was something that we used to do while idling in office jobs: stuck in front of computers all day, we had to find something on our screens to fill the down time. An earlier generation of blogs such as the Awl and Gawker seemed designed for aimless Internet surfing, delivering intermittent gossip, amusing videos, and personal essays curated by editors with quirky and individuated tastes. (When the Awl closed, in 2017, Jia Tolentino lamented the demise of “online freedom and fun.”) Now, in the aftermath of the pandemic, amid ongoing work-from-home policies, office workers are less tethered to their computers, and perhaps thus less inclined to chase likes on social media. They can walk away from their desks and take care of their children, walk their dog, or put their laundry in. This might have a salutary effect on individuals, but it means that fewer Internet-obsessed people are furiously creating posts for the rest of us to consume. The user growth rate of social platforms over all has slowed over the past several years; according to one estimate, it is down to 2.4 per cent in 2023.

That earlier generation of blogs once performed the task of aggregating news and stories from across the Internet. For a while, it seemed as though social-media feeds could fulfill that same function. Now it’s clear that the tech companies have little interest in directing users to material outside of their feeds. According to Axios, the top news and media sites have seen “organic referrals” from social media drop by more than half over the past three years. As of last week, X no longer displays the headlines for articles that users link to. The decline in referral traffic disrupts media business models, further degrading the quality of original content online. The proliferation of cheap, instant A.I.-generated content promises to make the problem worse.

Choire Sicha, the co-founder of the Awl and now an editor at New York, told me that he traces the seeds of social media’s degradation back a decade. “If I had a time machine I’d go back and assassinate 2014,” he said. That was the year of viral phenomena such as Gamergate, when a digital mob of disaffected video-game fans targeted journalists and game developers on social media; Ellen DeGeneres’s selfie with a gaggle of celebrities at the Oscars, which got retweeted millions of times; and the brief, wondrous fame of Alex, a random teen retail worker from Texas who won attention for his boy-next-door appearance. In those events, we can see some of the nascent forces that would solidify in subsequent years: the tyranny of the loudest voices; the entrenchment of traditional fame on new platforms; the looming emptiness of the content that gets most furiously shared and promoted. But at that point they still seemed like exceptions rather than the rule.

I have been trying to recall the times I’ve had fun online unencumbered by anonymous trolling, automated recommendations, or runaway monetization schemes. It was a long time ago, before social networks became the dominant highways of the Internet. What comes to mind is a Web site called Orisinal that hosted games made with Flash, the late interactive animation software that formed a significant part of the kitschy Internet of the two-thousands, before everyone began posting into the same platform content holes. The games on the site were cartoonish, cute, and pastel-colored, involving activities like controlling a rabbit jumping on stars into the sky or helping mice make a cup of tea. Orisinal was there for anyone to stumble upon, without the distraction of follower counts or sponsored content. You could e-mail the site to a friend, but otherwise there was nothing to share. That old version of the Internet is still there, but it’s been eclipsed by the modes of engagement that the social networks have incentivized. Through Reddit, I recently dug up an emulator of all the Orisinal games and quickly got absorbed into one involving assisting deer leaping across a woodland gap. My only reward was a personal high score. But it was more satisfying, and less lonely, than the experience these days on X. ♦

Underage Workers Are Training AI

Companies that provide Big Tech with AI data-labeling services are inadvertently hiring young teens to work on their platforms, often exposing them to traumatic content.

Like most kids his age, 15-year-old Hassan spent a lot of time online. Before the pandemic, he liked playing football with local kids in his hometown of Burewala in the Punjab region of Pakistan. But Covid lockdowns made him something of a recluse, attached to his mobile phone. “I just got out of my room when I had to eat something,” says Hassan, now 18, who asked to be identified under a pseudonym because he was afraid of legal action. But unlike most teenagers, he wasn’t scrolling TikTok or gaming. From his childhood bedroom, the high schooler was working in the global artificial intelligence supply chain, uploading and labeling data to train algorithms for some of the world’s largest AI companies.

The raw data used to train machine-learning algorithms is first labeled by humans, and human verification is also needed to evaluate their accuracy. This data-labeling ranges from the simple—identifying images of street lamps, say, or comparing similar ecommerce products—to the deeply complex, such as content moderation, where workers classify harmful content within data scraped from all corners of the internet. These tasks are often outsourced to gig workers, via online crowdsourcing platforms such as Toloka, which was where Hassan started his career.

A friend put him on to the site, which promised work anytime, from anywhere. He found that an hour’s labor would earn him around $1 to $2, he says, more than the national minimum wage, which was about $0.26 at the time. His mother is a homemaker, and his dad is a mechanical laborer. “You can say I belong to a poor family,” he says. When the pandemic hit, he needed work more than ever. Confined to his home, online and restless, he did some digging, and found that Toloka was just the tip of the iceberg.

“AI is presented as a magical box that can do everything,” says Saiph Savage, director of Northeastern University’s Civic AI Lab. “People just simply don’t know that there are human workers behind the scenes.”

At least some of those human workers are children. Platforms require that workers be over 18, but Hassan simply entered a relative’s details and used a corresponding payment method to bypass the checks—and he wasn’t alone in doing so. WIRED spoke to three other workers in Pakistan and Kenya who said they had also joined platforms as minors, and found evidence that the practice is widespread.

“When I was still in secondary school, so many teens discussed online jobs and how they joined using their parents' ID,” says one worker who joined Appen at 16 in Kenya, who asked to remain anonymous. After school, he and his friends would log on to complete annotation tasks late into the night, often for eight hours or more.

Appen declined to give an attributable comment.

“If we suspect a user has violated the User Agreement, Toloka will perform an identity check and request a photo ID and a photo of the user holding the ID,” Geo Dzhikaev, head of Toloka operations, says.

Driven by a global rush into AI, the global data labeling and collection industry is expected to grow to over $17.1 billion by 2030, according to Grand View Research, a market research and consulting company. Crowdsourcing platforms such as Toloka, Appen, Clickworker, Teemwork.AI, and OneForma connect millions of remote gig workers in the global south to tech companies located in Silicon Valley. Platforms post micro-tasks from their tech clients, which have included Amazon, Microsoft Azure, Salesforce, Google, Nvidia, Boeing, and Adobe. Many platforms also partner with Microsoft’s own data services platform, the Universal Human Relevance System (UHRS).

These workers are predominantly based in East Africa, Venezuela, Pakistan, India, and the Philippines—though there are even workers in refugee camps, who label, evaluate, and generate data. Workers are paid per task, with remuneration ranging from a cent to a few dollars—although the upper end is considered something of a rare gem, workers say. “The nature of the work often feels like digital servitude—but it's a necessity for earning a livelihood,” says Hassan, who also now works for Clickworker and Appen.

Sometimes, workers are asked to upload audio, images, and videos, which contribute to the data sets used to train AI. Workers typically don’t know exactly how their submissions will be processed, but these can be pretty personal: On Clickworker’s worker jobs tab, one task states: “Show us you baby/child! Help to teach AI by taking 5 photos of your baby/child!” for €2 ($2.15). The next says: “Let your minor (aged 13-17) take part in an interesting selfie project!”

Some tasks involve content moderation—helping AI distinguish between innocent content and that which contains violence, hate speech, or adult imagery. Hassan shared screen recordings of tasks available the day he spoke with WIRED. One UHRS task asked him to identify “fuck,” “c**t,” “dick,” and “bitch” from a body of text. For Toloka, he was shown pages upon pages of partially naked bodies, including sexualized images, lingerie ads, an exposed sculpture, and even a nude body from a Renaissance-style painting. The task? Decipher the adult from the benign, to help the algorithm distinguish between salacious and permissible torsos.

Hassan recalls moderating content while under 18 on UHRS that, he says, continues to weigh on his mental health. He says the content was explicit: accounts of rape incidents, lifted from articles quoting court records; hate speech from social media posts; descriptions of murders from articles; sexualized images of minors; naked images of adult women; adult videos of women and girls from YouTube and TikTok.

Many of the remote workers in Pakistan are underage, Hassan says. He conducted a survey of 96 respondents on a Telegram group chat with almost 10,000 UHRS workers, on behalf of WIRED. About a fifth said they were under 18.

Awais, 20, from Lahore, who spoke on condition that his first name not be published, began working for UHRS via Clickworker at 16, after he promised his girlfriend a birthday trip to the turquoise lakes and snow-capped mountains of Pakistan’s northern region. His parents couldn’t help him with the money, so he turned to data work, joining using a friend’s ID card. “It was easy,” he says.

He worked on the site daily, primarily completing Microsoft’s “Generic Scenario Testing Extension” task. This involved testing homepage and search engine accuracy. In other words, did selecting “car deals” on the MSN homepage bring up photos of cars? Did searching “cat” on Bing show feline images? He was earning $1 to $3 each day, but he found the work both monotonous and infuriating. At times he found himself working 10 hours for $1, because he had to do unpaid training to access certain tasks. Even when he passed the training, there might be no task to complete; or if he breached the time limit, they would suspend his account, he says. Then seemingly out of nowhere, he got banned from performing his most lucrative task—something workers say happens regularly. Bans can occur for a host of reasons, such as giving incorrect answers, answering too fast, or giving answers that deviate from the average pattern of other workers. He’d earned $70 in total. It was almost enough to take his high school sweetheart on the trip, so Awais logged off for good.

Clickworker did not respond to requests for comment. Microsoft declined to comment.

“In some instances, once a user finishes the training, the quota of responses has already been met for that project and the task is no longer available,” Dzhikaev said. “However, should other similar tasks become available, they will be able to participate without further training.”

Researchers say they’ve found evidence of underage workers in the AI industry elsewhere in the world. Julian Posada, assistant professor of American Studies at Yale University, who studies human labor and data production in the AI industry, says that he’s met workers in Venezuela who joined platforms as minors.

Bypassing age checks can be pretty simple. The most lenient platforms, like Clickworker and Toloka, simply ask workers to state they are over 18; the most secure, such as Remotasks, employ face recognition technology to match workers to their photo ID. But even that is fallible, says Posada, citing one worker who says he simply held the phone to his grandmother’s face to pass the checks. The sharing of a single account within family units is another way minors access the work, says Posada. He found that in some Venezuelan homes, when parents cook or run errands, children log on to complete tasks. He says that one family of six he met, with children as young as 13, all claimed to share one account. They ran their home like a factory, Posada says, so that two family members were at the computers working on data labeling at any given point. “Their backs would hurt because they have been sitting for so long. So they would take a break, and then the kids would fill in,” he says.

The physical distances between the workers training AI and the tech giants at the other end of the supply chain (“the deterritorialization of the internet,” Posada calls it) create a situation where whole workforces are essentially invisible, governed by a different set of rules, or by none.

The lack of worker oversight can even prevent clients from knowing if workers are keeping their income. One Clickworker user in India, who requested anonymity to avoid being banned from the site, told WIRED he “employs” 17 UHRS workers in one office, providing them with a computer, mobile, and internet, in exchange for half their income. His own workers are aged between 18 and 20, but because Clickworker lacks age certification requirements, he knows of teenagers using the platform.

In the more shadowy corners of the crowdsourcing industry, the use of child workers is overt.

Captcha (Completely Automated Public Turing test to tell Computers and Humans Apart) solving services, where crowdsourcing platforms pay humans to solve captchas, are a less understood part of the AI ecosystem. Captchas are designed to distinguish a bot from a human; the most notable example is Google’s reCaptcha, which asks users to identify objects in images to enter a website. The exact purpose of services that pay people to solve them remains a mystery to academics, says Posada. “But what I can confirm is that many companies, including Google's reCaptcha, use these services to train AI models,” he says. “Thus, these workers indirectly contribute to AI advancements.”

Google did not respond to a request for comment in time for publication.

There are at least 152 active services, mostly based in China, with more than half a million people working in the underground reCaptcha market, according to a 2019 study by researchers from Zhejiang University in Hangzhou.

“Stable job for everyone. Everywhere,” one service, Kolotibablo, states on its website. The company has a promotional website dedicated to showcasing its worker testimonials, which includes images of young children from across the world. In one, a smiling Indonesian boy shows his 11th birthday cake to the camera. “I am very happy to be able to increase my savings for the future,” writes another, no older than 7 or 8. A 14-year-old girl in a long Hello Kitty dress shares a photo of her workstation: a laptop on a pink, Barbie-themed desk.

Not every worker WIRED interviewed felt frustrated with the platforms. At 17, most of Younis Hamdeen’s friends were waiting tables. But the Pakistani teen opted to join UHRS via Appen instead, using the platform for three or four hours a day, alongside high school, earning up to $100 a month. Comparing products listed on Amazon was the most profitable task he encountered. “I love working for this platform,” Hamdeen, now 18, says, because he is paid in US dollars—which is rare in Pakistan—and so benefits from favorable exchange rates.

But the pay for this work is incredibly low compared to the wages of in-house employees of the tech companies, and the benefits of the work flow one way, from the global south to the global north, which leads to uncomfortable parallels. “We do have to consider the type of colonialism that is being promoted with this type of work,” says the Civic AI Lab’s Savage.

Hassan recently got accepted to a bachelor’s program in medical lab technology. The apps remain his sole income, and he works an 8 am to 6 pm shift, followed by 2 am to 6 am. However, his earnings have fallen to just $100 per month because demand for tasks has outstripped supply as more workers have joined since the pandemic.

He laments that UHRS tasks can pay as little as 1 cent. Even on higher-paid jobs, such as occasional social media tasks on Appen, the amount of time he needs to spend doing unpaid research means he needs to work five or six hours to complete an hour of real-time work, all to earn $2, he says.

“It’s digital slavery,” says Hassan.

The collapse of news?

Since Cambridge Analytica, Trump, Brexit, and Covid, news has become a problem for social networks. Summoned by the authorities to arbitrate the truth, most of them now seem to be retreating from news altogether, becoming places of self-fulfillment that shy away from politics. That is surely what explains the decline of news in users’ feeds, as Charlie Warzel aptly analyzes for The Atlantic. As the New York Times recently put it: “The major online platforms are breaking up with news.”

Social media platforms have long shaped how news is distributed, for example by pushing media outlets toward video, as Facebook did in 2015 when it deliberately overstated the average time users spent watching videos in order to push outlets to shift to producing video content. Today they are turning away from news in favor of entertainment and advertising. But they are not alone: readers themselves seem to be hitting an information ceiling that pushes them away from the news, the Pew Research Center reports. Consumption of news, which is particularly anxiety-inducing, has plunged since 2020. Many have turned to easier content, such as that produced by influencers. “Consumer trust is not necessarily based on the quality of the reporting or on the prestige and history of the brand, but on strong parasocial relationships,” Warzel observes. In 2014, the heyday of social news, 75% of American adults surveyed by Pew said that the internet and social media had helped them feel more informed. That is no longer the case.

With the algorithmic acceleration of news on social networks, news cycles have sped up: Twitter became the editor in chief of the hottest topics that the media had to cover, in a loop that reinforced popular subjects, as with Donald Trump’s tweets, which every outlet commented on. From 2013 to 2017, current events became the fuel that ran social networks, gradually turning news into a battlefield. Many users then turned away from it. New social networks exploded, TikTok among them, and the older networks adapted, Facebook in particular. A recent Morning Consult survey showed that “people liked Facebook more now that there was less news on it.”

Commentary on current events, like news itself, will not disappear entirely, Warzel believes, but the media have just lost some of their cultural influence. For John Herrman in the New Yorker, the 2024 US presidential campaign may be the first without media to shape the major political narratives. “Social networks brought out the worst in the news business, and news, in turn, brought out the worst in many social networks.” The alliance between social networks and news has run its course. It remains to be seen what the world of influence will produce, in a world where the force of the written word and the structuring of information seem to be fading because of recommendation machines that are no longer built for them.

The end of a shared world

In a second article, Warzel returns to this disappearance of news. For him, the internet is now fragmented by social recommendations, so that we share little of what others consume. “The very notion of popularity is up for debate”: no one really knows anymore whether a given trend is as viral as it appears. Metrics that are hard to compare, opaque recommendations, news sites closed off behind paywalls, the declining relevance of news on social media, and an invasion of advertising: we no longer understand what is happening online. You have probably never seen the year’s most popular TikTok videos, nor Facebook’s most viewed content! And hardly anyone talked about Netflix’s most popular show, The Night Agent! On the one hand, popular content is more viral than ever; on the other, that popularity is more siloed than ever! Audience comparisons across content and platforms are becoming particularly hard to decode. For example, the Bin Laden speech at the center of a recent controversy over its popularity among young Americans was not as viral as many claimed, as the Washington Post and Ryan Broderick demonstrated. It is as if we had entered a moment of great confusion about virality, with view counts compared from one platform to another even though their audiences and self-reinforcing mechanisms are very different. The fact that platforms are closing off access to their metrics and to research does not help, of course. Without a scale of comparison, without the means to see what circulates and how, we become blind to every phenomenon. And to one in particular: the manipulation of information by foreign powers.

These transformations are not yet complete or digested, and already another is looming, James Vincent wrote for The Verge: “the old web is dying and the new web is struggling to be born.” The production of synthetic text, images, video, and sound is parasitizing this ecosystem as it recomposes itself. Accessible directly from search engines, AI output is replacing the traffic that used to lead to the news. “AI aims to produce cheap content from the work of others.” Bing AI or Google’s Bard could end up killing the ecosystem that gave search engines their value by offering their own “artificial abundance.” Granted, this will not be the first time the information ecosystem has changed: Wikipedia did kill the Encyclopaedia Britannica. But for James Vincent, while the web has from the outset structured the great battle over information by changing the producers, the modes of access, and the business models, nothing in this emerging configuration guarantees that the system to come will be better than the one we had.

“The internet isn’t fun anymore,” lamented Kyle Chayka for the New Yorker. Through endless algorithmic adjustments, social networks have become utterly tedious, explained Marie Turcan of Numérama, denouncing the web of boredom! The invisibilization of external links, and even more so of writing relative to video, seems to be finishing off what quality remained, as David-Julien Rahmil reports for L’ADN. In another article, Rahmil notes that direct exchanges have overtaken public ones: “Ubiquitous advertising, heightened political tensions, the culture of the perpetual clash, and the feeling of information burnout have no doubt hastened the fall of the major social platforms.” From now on, each platform works only for itself. In an internet more fragmented than ever, each platform will bring forth its own professionals and its own influencers, and it is quite likely that they will no longer overlap from one platform to the next.

As for social networks, they have devalued themselves, as Twitter shows: it long embodied the real-time news feed, the central venue of an influential and somewhat elitist conversation, explains Nilay Patel for The Verge. It was “context collapse that made Twitter so dangerous and so reductive, but it was also what made it thrilling.” The platform made its users faster and more agile, but also too reactive. Brands moved away from the media to manage their social presence themselves. “Stepping back now, you can see exactly how destructive this situation was for journalism: journalists around the world supplied Twitter with real-time news and commentary for free, increasingly learning to shape stories for the algorithm rather than for their actual readers. Meanwhile, the media companies they worked for faced an exodus of their biggest advertising clients to social platforms offering better and more integrated ad products, a direct connection to the audience, and no constraining editorial ethics. The news became smaller and smaller, even as the stories got bigger.” Everyone there was a journalist, even as the news industry itself was drying up. “Twitter was founded in 2006. Since that year, newspaper employment has fallen by 70% and residents of more than half of American counties have little or no local news.” With the pandemic, Trump, and Black Lives Matter, Twitter reached a tipping point, collapsing under its own power. The audience began to ebb in the face of its toxicity. For Patel, Musk’s takeover of the platform is a reaction to the waning power of celebrities and tech people. As it amplifies its virality and toxicity, the platform keeps declining. The challengers (Bluesky, Threads, Mastodon…) are to Twitter “what methadone is to heroin.” The audience is more fragmented than ever, like those users who still run from one platform to another to message their contacts, or those readers disoriented at no longer finding anything to read.

Generational change or enjunkification?

The age of conversation that opened the web of the 21st century is over! And what remains of our conversations will be taken over by conversational agents, which will be far more effective political and ideological agents than our fellow humans, as Olivier Ertzscheid explains! Ultimately, chatbots point to an even more personal relationship with information, with each person talking to their own and no longer really having any ties to shared content.

For Max Read, in the New York Times, perhaps these ongoing changes should be read differently. These transformations also have economic origins, he notes too briefly. “The end of the low-interest-rate era has upended the start-up economy, ending rapid-growth practices like blitzscaling and reducing the number of new internet companies vying for our attention; companies like Alphabet and Facebook are now mature, dominant businesses rather than disruptive newcomers.” Yet rather than digging into this economic explanation, Max Read settles on another one. If the internet is dying, it is first of all because we are getting older. The shape and culture of the internet were molded by the preferences of the generations that took part in it. Today’s internet is no longer that of social media (2000-2010), nor that of social networks (2010-2020). “According to the consumer research firm GWI, millennials’ screen time has been in steady decline for years. Only 42% of 30-to-49-year-olds say they are online ‘almost constantly,’ compared with 49% of 18-to-29-year-olds. We are no longer even the early adopters: 18-to-29-year-olds are more likely to have used ChatGPT than 30-to-49-year-olds, though perhaps only because we no longer have homework to do.”

“The most engaged American audience on the internet is no longer millennials but our successors in Generation Z. If the internet is no longer fun for millennials, it may simply be because it is no longer our internet. It now belongs to the zoomers.”

The formats, the celebrities, the very language of this generation are totally different, Read explains. “Zoomers and the Generation Alpha teens nipping at their generational heels still seem to be having fun online. Even if I find it all impenetrable and a little irritating, the creative expression and exuberant sociality that made the internet so fun for me ten years ago are thriving among 20-year-olds on TikTok, Instagram, Discord, Twitch, and even X. Skibidi Toilet, Fanum tax, the rizzler: I will not debase myself by pretending to know what these memes are, or what their appeal is, but I know that zoomers seem to love them. Or, at any rate, I can verify that they love using them to confuse and alienate middle-aged millennials like me.”

Granted, they are co-opted and exploited by a small handful of powerful platforms, but others before them sought to arbitrate and commodify our online activity. “Engagement-driven platforms have always cultivated influencers, abuse, and disinformation. When you dig deeper, what seems to have changed on the web in recent years is not the structural dynamics but the cultural signifiers.”

“In other words, enjunkification has always taken place on the commercial web, whose largely advertising-based business model seems to impose an ever-shifting race to the bottom. Perhaps what frustrated, alienated, aging internet users like me are experiencing here is not only the fruits of an enjunkified internet, but also the loss of the cognitive elasticity, sense of humor, and abundance of free time needed to navigate all this bewildering junk with agility and cheer.”

But that is a very pessimistic view of the current transformations. Writing for Rolling Stone, Anil Dash is enthusiastic. With its fragmentation, the internet is becoming weird again, as it was at the beginning! The disappearance of central applications (even if that is not quite the case) promises a return of strange services and unexpected offerings, such as Neta Bomani’s school of poetic computation, those of the bot maker Stephan Bohacek, those of the designer Elan Kiderman Ullendorff, who has fun coming up with ways to “escape the algorithms,” or the small subversions of the artist and programmer Darius Kazemi, who invited people to create their own autonomous micro social networks on Mastodon.

It is not certain that these subversions ever stopped. Above all, they were made invisible by the big social platforms. Nor is it certain that the influencer audience and the synthetic audience now emerging will give them any more room than they had yesterday. Still, Anil Dash is right: the only certainty is that the strangest content will keep trying to reach us. Witness the videos that colonized the feeds of the youngest viewers from a handful of keywords, which James Bridle denounced in the excellent book New Dark Age. Elan Kiderman Ullendorff amused himself by creating a TikTok account of the most repulsive videos recommended to him, skipping all those that interested him and keeping only the worst. These videos seem to compose a Dorian Gray portrait of each of us. The addictive web is the mirror of the repulsive web; the web we hate is the mirror of the web of our dreams. One certainty, yes: tomorrow’s web is likely to be far stranger and more disturbing than it is now! With algorithmic adjustments having cut out what was most interesting, we will probably be confronted with the worst more than ever!

Hubert Guillaud